“Prompt engineering” is not dead.

But calling modern AI work “prompt engineering” is starting to sound like calling a Formula 1 team “the steering wheel department.”

Prompts matter. Context matters. Examples matter. All true.

They are just no longer the differentiator.

The shift most teams have not internalized yet

In enterprise settings, the hardest part is not getting a model to produce a good answer. It is getting a system to produce a reliable outcome in the presence of messy data, ambiguous requests, edge cases, and real-world consequences.

That reliability does not come from better wording. It comes from the system around the model.

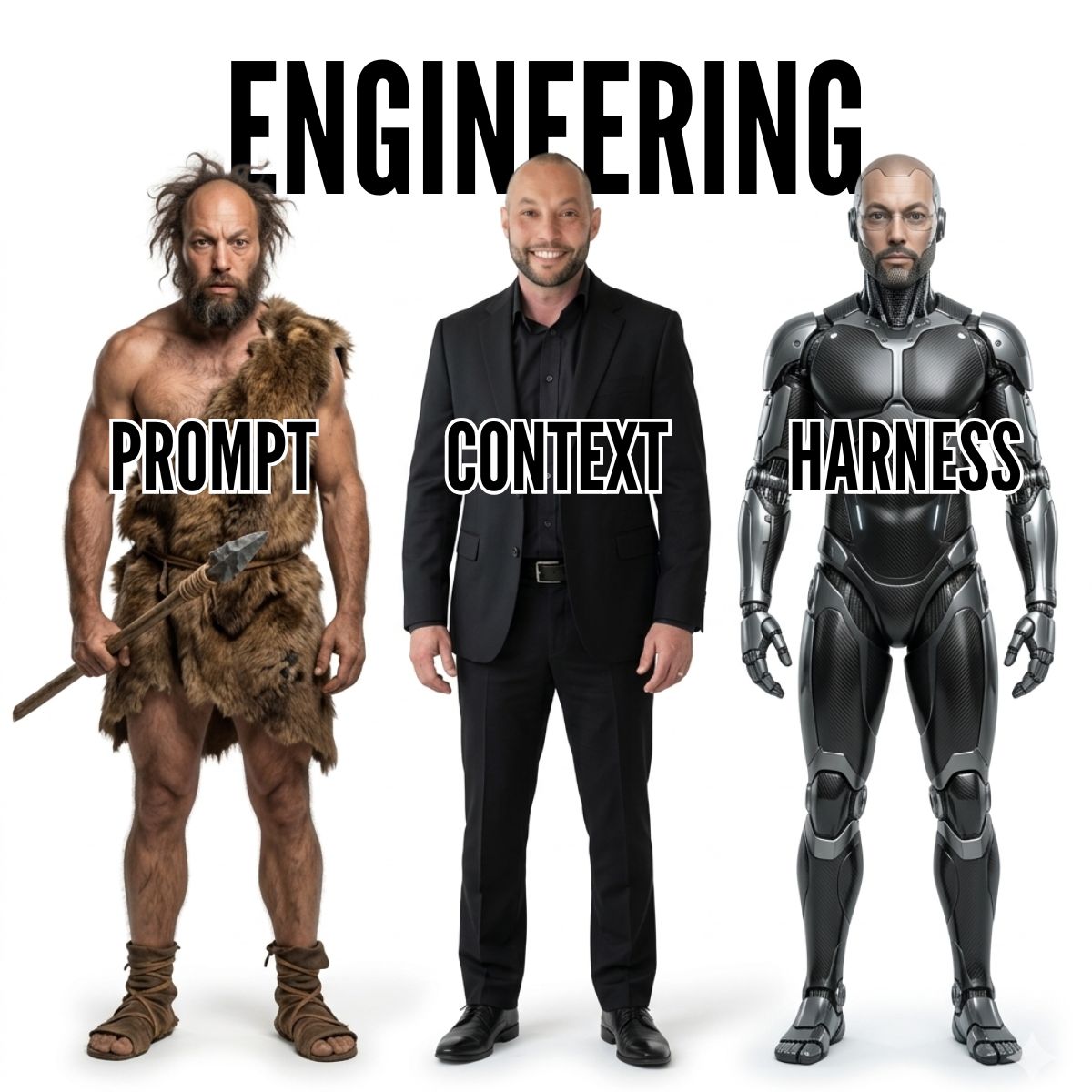

Three eras of AI delivery

1) Prompt era: “maybe if I word it better”

This is where most organizations started. The model is a black box and the main control lever is how you ask.

It is useful. It teaches you what the model can do. But it does not create operational dependability.

2) Context era: “the model needs the right information”

Then we learned the model needs the right information at the right time: retrieval, grounding, memory, tool access, and better context assembly.

Huge improvement, but still not enough. A well-informed model can still confidently walk into a glass door.

3) Harness era: “the model needs adult supervision”

This is the current frontier: building the harness around the model that makes it safe and dependable in real workflows.

The model does the reasoning. The harness provides constraints, checks, and control.

What a real harness looks like (in plain language)

Validation and enforcement

Do not just request a schema. Validate it. Check constraints. Detect missing fields. Reject or retry outputs when they are wrong.
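
To make that concrete, here is a minimal sketch of a validate-then-retry loop in Python. The schema, the field names (`customer_id`, `amount`, `currency`), and the `generate` callable standing in for a model call are all assumptions made up for illustration; a real harness would likely use a schema library and its own retry policy.

```python
import json
from typing import Callable, List, Optional, Tuple

# Hypothetical schema for illustration: field name -> accepted Python types.
REQUIRED_FIELDS = {"customer_id": (str,), "amount": (int, float), "currency": (str,)}
ALLOWED_CURRENCIES = {"USD", "EUR", "GBP"}


def validate(raw: str) -> Tuple[Optional[dict], List[str]]:
    """Parse model output and return (data, errors); data is None on failure."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return None, [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return None, ["top-level value must be a JSON object"]

    errors = []
    for name, types in REQUIRED_FIELDS.items():
        if name not in data:
            errors.append(f"missing field: {name}")
        # bool is a subclass of int in Python, so reject it explicitly.
        elif isinstance(data[name], bool) or not isinstance(data[name], types):
            errors.append(f"wrong type for field: {name}")
    # Constraint checks run only once the structure is sound.
    if not errors:
        if data["amount"] <= 0:
            errors.append("amount must be positive")
        if data["currency"] not in ALLOWED_CURRENCIES:
            errors.append(f"unsupported currency: {data['currency']}")
    return (data if not errors else None), errors


def generate_validated(generate: Callable[[List[str]], str], max_attempts: int = 3) -> dict:
    """Call the model, validate, and retry with the error list as feedback."""
    feedback: List[str] = []
    for _ in range(max_attempts):
        data, feedback = validate(generate(feedback))
        if data is not None:
            return data
    raise ValueError(f"output rejected after {max_attempts} attempts: {feedback}")
```

The design choice worth noting is that the errors are fed back into the next attempt and the loop has a hard ceiling, so a bad output is either corrected or rejected loudly rather than passed downstream.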

Persistent state

Long-running tasks drift. A harness tracks what was done, what is next, what assumptions were made, and what decisions were taken. Without this, agents “forget” and loop.
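
As a rough illustration, a task ledger can be as small as the Python sketch below. The `TaskLedger` class and its field names are hypothetical, chosen to mirror the four things listed above; where the ledger is stored (file, database, queue) is left open.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List, Optional


@dataclass
class TaskLedger:
    """Hypothetical ledger: what was done, what is next, and why."""
    goal: str
    pending: List[str] = field(default_factory=list)
    done: List[str] = field(default_factory=list)
    assumptions: List[str] = field(default_factory=list)
    decisions: List[str] = field(default_factory=list)

    def next_step(self) -> Optional[str]:
        """Return the next pending step, or None when the task is finished."""
        return self.pending[0] if self.pending else None

    def complete(self, step: str, decision: str = "") -> None:
        """Move a step from pending to done; refuse repeats to prevent loops."""
        if step in self.done:
            raise ValueError(f"step already completed: {step}")
        if step not in self.pending:
            raise ValueError(f"unknown step: {step}")
        self.pending.remove(step)
        self.done.append(step)
        if decision:
            self.decisions.append(decision)

    def to_json(self) -> str:
        """Serialize so the ledger survives restarts and context resets."""
        return json.dumps(asdict(self), indent=2)

    @classmethod
    def from_json(cls, raw: str) -> "TaskLedger":
        return cls(**json.loads(raw))
```

Because the ledger lives outside the model's context window, it can be reloaded at the start of every step, and a repeated step fails fast instead of silently looping.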

Controlled execution

If an agent can run code, execute queries, or modify systems, it needs a bounded environment with tests, permissions, and feedback loops. Otherwise you are deploying an intern with production access and no manager.
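
One way to picture the "tests and feedback loops" part is the Python sketch below: the agent's change is applied to a copy, checks run against the copy, and the change is only returned for commit if every check passes. The names `apply_guarded`, `propose`, and `checks` are assumptions for illustration, and copying a dictionary is not a security sandbox; real isolation needs containers, scoped credentials, or similar controls.

```python
import copy
from typing import Callable, List, Optional

State = dict
# A check returns an error message, or None when the state is acceptable.
Check = Callable[[State], Optional[str]]


def apply_guarded(
    state: State,
    propose: Callable[[State, List[str]], None],
    checks: List[Check],
    max_attempts: int = 3,
) -> State:
    """Let the agent mutate a copy; return it only if all checks pass."""
    feedback: List[str] = []
    for _ in range(max_attempts):
        candidate = copy.deepcopy(state)  # the original is never touched
        propose(candidate, feedback)      # agent sees failures from the last try
        feedback = []
        for check in checks:
            message = check(candidate)
            if message is not None:
                feedback.append(message)
        if not feedback:
            return candidate
    raise RuntimeError(f"change rejected after {max_attempts} attempts: {feedback}")
```

The bounded attempt count matters as much as the checks: an agent that cannot pass the tests stops and escalates instead of retrying forever.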

Tool and permission boundaries

No God Mode. Separate read tools from write tools. Require explicit approvals for high-impact actions. Minimize blast radius by design.
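
A minimal Python sketch of that boundary follows. `Tool`, `ToolGateway`, `ApprovalRequired`, and the `approver` callback are hypothetical names invented for this example; in practice the approver might be a human review queue or a policy engine.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, Iterable


class ApprovalRequired(Exception):
    """Raised when a write tool is called without an approval."""


@dataclass(frozen=True)
class Tool:
    name: str
    func: Callable[..., Any]
    writes: bool = False  # read-only unless explicitly marked as a write tool


class ToolGateway:
    """Single entry point for tool calls: allowlist plus approval for writes."""

    def __init__(self, tools: Iterable[Tool], approver: Callable[[str, Dict[str, Any]], bool]):
        self._tools = {tool.name: tool for tool in tools}
        self._approver = approver

    def call(self, name: str, **kwargs: Any) -> Any:
        tool = self._tools.get(name)
        if tool is None:
            # Anything not registered is denied by default.
            raise PermissionError(f"tool not allowed: {name}")
        if tool.writes and not self._approver(name, kwargs):
            raise ApprovalRequired(f"write denied without approval: {name}")
        return tool.func(**kwargs)
```

Giving each agent its own gateway with only the tools it needs keeps the blast radius small: a read-only agent simply has no write tools registered.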

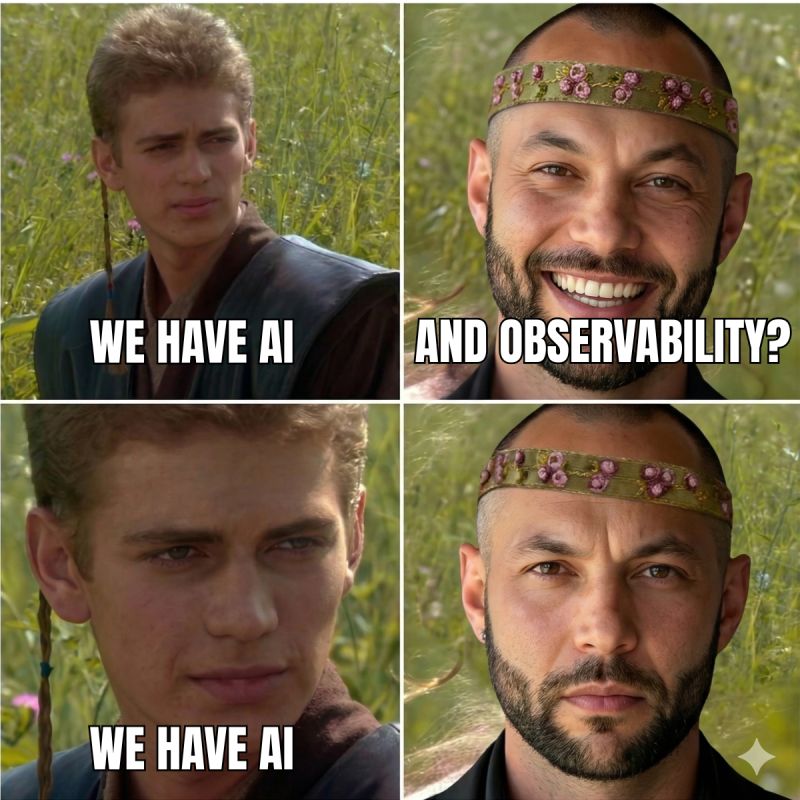

Observability

If you cannot see what the agent did, what tools it used, and why it made a decision, you do not have a system. You have a confident ghost.
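
As a sketch of what "seeing" can mean in practice, the Python example below records every tool call, its stated reason, and its result as structured events that can be exported as JSON lines. The `Trace` class and its event fields are assumptions for illustration, not a standard format.

```python
import json
import time
import uuid
from typing import Any, Callable, Dict, List, Optional


class Trace:
    """Hypothetical per-run trace: an ordered list of structured events."""

    def __init__(self, run_id: Optional[str] = None):
        self.run_id = run_id or uuid.uuid4().hex
        self.events: List[Dict[str, Any]] = []

    def record(self, kind: str, **details: Any) -> None:
        self.events.append(
            {"run_id": self.run_id, "seq": len(self.events), "ts": time.time(),
             "kind": kind, **details}
        )

    def tool_call(self, name: str, func: Callable[..., Any], reason: str, **kwargs: Any) -> Any:
        """Run a tool and log what was called, why, and what came back."""
        self.record("tool_call", tool=name, reason=reason, args=kwargs)
        try:
            result = func(**kwargs)
        except Exception as exc:
            self.record("tool_error", tool=name, error=repr(exc))
            raise
        self.record("tool_result", tool=name, result=result)
        return result

    def to_jsonl(self) -> str:
        """One JSON object per line, easy to ship to a log store and review."""
        return "\n".join(json.dumps(event, default=str) for event in self.events)
```

Requiring a `reason` on every tool call is a deliberate choice here: it forces the agent's rationale into the log next to the action, which is what a reviewer needs when diagnosing a failure.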

Why this matters to executives (not just engineers)

Because the harness is where risk and ROI live.

- Risk is reduced when the system has constraints, approvals, and auditability.

- ROI increases when the system can be trusted to run consistently and scale beyond a handful of demos.

Without a harness, every deployment is a one-off. Reliability is “best effort.” And scaling becomes a sequence of incidents.

How teams get this wrong

- They keep iterating on prompts instead of adding checks and tests.

- They build one giant agent with broad permissions and no containment.

- They skip logging and then cannot diagnose failures, which kills trust.

- They treat governance as a meeting instead of an engineering artifact.

A practical starting point

If you want to move from demos to dependable systems, start small and system-first:

- Choose one workflow where errors have a clear cost.

- Add a validator before you add another prompt trick.

- Introduce state (even a simple task ledger) to reduce drift.

- Split permissions and require approvals for writes.

- Turn on logs you can actually review.

The bottom line

Prompting and context are table stakes. The advantage now belongs to the teams who can build the rulebook, the firewall, and the panic button around the model.

That is harness engineering, and it is what turns AI from a clever assistant into a dependable enterprise capability.