“We gave everyone AI tools.” … and then expected magic to happen.

But what happened was not what we expected.

Last quarter, I spoke with a leadership team frustrated that their expensive AI rollout “wasn’t delivering productivity gains.” Their employees had access. Licenses were paid. Tools were live.

But usage was shallow. Impact was minimal.

Why?

Because they skipped one inconvenient step: teaching people how to actually use it.

AI is not a feature you simply turn on

A lot of organizations are treating AI like a software rollout. Buy the licenses. Send the announcement. Add a few links to the intranet. Maybe host a lunch-and-learn. Then wait for productivity to show up in the dashboard.

That mindset misses what makes AI different.

AI is not like email. It is not like Slack. It is not automatically intuitive just because it has a chat box. A chat interface makes AI look simple, but the simplicity is deceptive. The value does not come from typing into the box. The value comes from knowing what to ask, how to frame the work, how to judge the output, and how to integrate that output back into the business process.

That is a skill. Not a feature.

The brilliant intern problem

A better analogy is hiring a brilliant intern who does exactly what you ask and nothing you meant.

If you give that intern vague instructions, missing context, unclear success criteria, and no examples, you should not be surprised when the output is weak. The intern may be capable, but the assignment was not well defined.

AI behaves the same way. It can draft, analyze, summarize, classify, compare, brainstorm, critique, and automate pieces of work. But it needs direction. It needs context. It needs constraints. It needs a definition of good. It needs someone who knows when the answer is useful, when it is incomplete, and when it is confidently wrong.

No training leads to bad prompts. Bad prompts lead to bad outputs. Bad outputs lead to the predictable executive conclusion: “AI doesn’t work.”

And just like that, a million-dollar initiative quietly dies.

Access without enablement creates shallow usage

When employees are handed AI tools without real enablement, most usage stays on the surface. People rewrite emails. Summarize documents. Generate outlines. Maybe clean up a slide. Those use cases are fine, but they rarely create transformational value on their own.

The bigger opportunity is helping people rethink the work itself.

Can AI reduce the number of manual handoffs in a workflow? Can it help a team analyze customer feedback faster? Can it turn messy operational data into better decisions? Can it create first drafts of recurring reports from governed sources? Can it help a manager spot risks before a status meeting? Can it help a service team resolve issues with more context and less swivel-chair work?

Those outcomes do not happen because a license exists. They happen when people understand where AI fits, where it does not, and how to redesign their work around it.

The organizations winning are investing in capability

The organizations getting real value from AI are not necessarily the ones with the fanciest tools. They are the ones investing in capability.

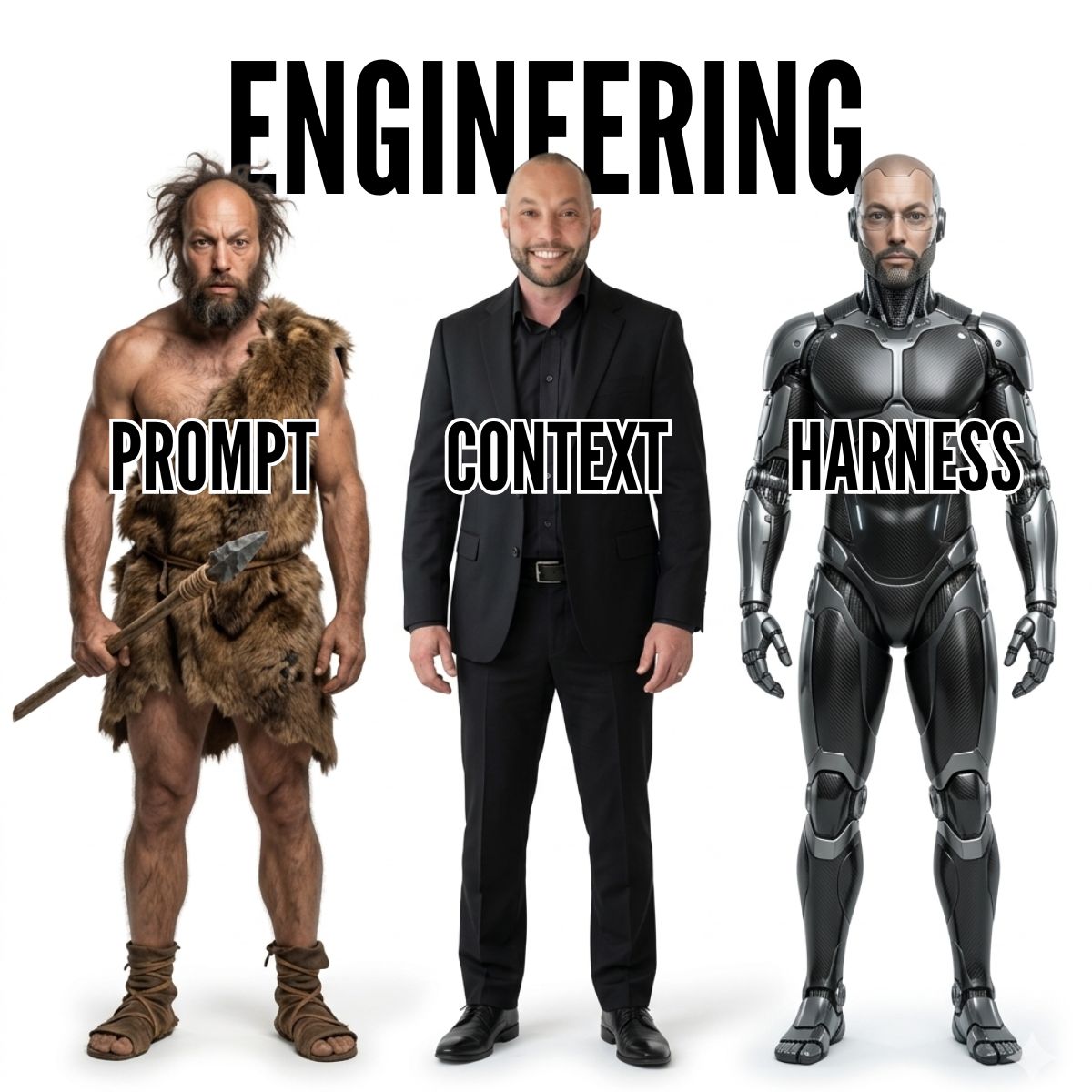

That usually includes four things.

- Prompt literacy: teaching people how to give AI useful context, constraints, examples, roles, formats, and evaluation criteria.

- Workflow redesign: identifying where AI can remove low-value work instead of merely making existing work slightly faster.

- Use-case-specific training: moving beyond generic demos and teaching teams how AI applies to sales, operations, finance, service, analytics, product, and leadership decisions.

- Cultural permission to experiment: giving employees room to test, share, fail safely, and build confidence without pretending every attempt has to be production-ready.

When people actually understand how to use AI, output quality tends to improve quickly. Time savings become easier to measure. Teams start building internal leverage instead of dependency. AI stops being a novelty and starts becoming part of how the organization learns.

The risk of untrained AI usage is real

There is another side to this: untrained employees can create risk just as quickly as they create drafts.

They may act on hallucinated outputs and make bad decisions. They may expose sensitive data. They may create inconsistent workflows that cannot scale. They may trust outputs they should challenge, or reject outputs they could improve with better framing. They may unknowingly create compliance, security, or quality issues because nobody taught them the boundaries.

That is why AI training cannot be limited to “here are ten prompts.” It has to include judgment. What information should never be pasted into a public tool? When should an output be verified? What decisions require human review? How should teams document successful use cases? What does good look like for this specific function?

Without that layer, AI adoption becomes random. Some employees become power users. Others avoid it. Some create real leverage. Others create mess. Leadership sees inconsistent results and assumes the technology failed.

But often, the technology was never the main problem. The learning curve was ignored.

AI exposes whether your organization knows how to learn

AI is not replacing your workforce.

It is exposing whether your organization knows how to learn.

That is the uncomfortable truth. The companies that treat AI as a procurement exercise will get procurement-level results. The companies that treat AI as a capability-building exercise will get something much more valuable: employees who can rethink work, improve outputs, reduce waste, and create leverage inside the business.

Buying the tool is the easy part.

Teaching the organization how to use it is where the real transformation starts.